Visual Regression Testing Part 2: Extending Grunt-PhantomCSS for Multiple Environments

Phase2 | Digital Agency

June 22, 2015

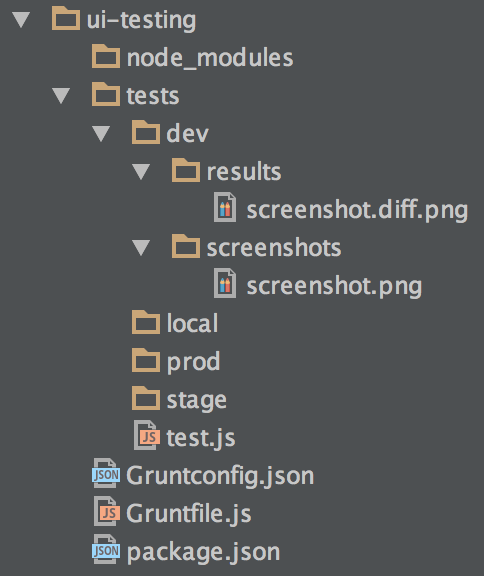

Earlier this month, I explored testing dynamic content with PhantomCSS in the first post of a multi-part blog series on visual regression testing. Today, I dive a little deeper into extending grunt-phantomcss for multiple environments.

Automation is the name of the game, and so it was with our visual regression test suite. The set of visual regression tests that we created in part 1 of this series allowed us to test for cosmetic changes throughout the Department of Energy platform, but it was inconvenient to fit into our workflow. The GruntJS task runner and the Jenkins continuous integration tool were the answer to our needs. Here, in part 2, I’ll walk through how we set up Grunt and the updates we contributed to the open source grunt-phantomcss plugin.

Grunt is widely used nowadays to script repetitive tasks, and with its ever-growing repository of plugins it would be crazy not to take advantage of it. Once Grunt is installed, typically through npm, we set up a Gruntfile listing the tasks developers can execute from the command line. Our primary task would not only run all of the visual regression tests but also let us specify the environment to run them against. With DOE, we have four such environments: local development environments, an integration environment, a staging environment, and our production environment. We needed the ability to maintain a separate set of baselines and test results for each environment.

Achieving this required an extension of Micah Godbolt’s fork of grunt-phantomcss. The grunt-phantomcss plugin establishes a PhantomCSS task to run the test suite. In the original plugin, all baselines and results are stored in two top-level directories, which is not ideal because it conflicts with the notion of modularity.

Micah Godbolt’s fork stores each test’s baseline(s) and result(s) in the directory of the test file itself, keeping the baselines, results, and tests together in a modular directory structure with less coupling between the tests. This made Micah’s fork a great starting point for us to build upon. Adding it to our repo was as easy as adding it to our package.json and running npm install.
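To make the per-test layout concrete, here is a small sketch under an assumed folder scheme: each test file's baselines and results live in sibling folders named by the `screenshots` and `results` options. The helper `artifactDirsFor` and the example test path are hypothetical; this illustrates the idea behind Micah's fork, not its actual code.

```javascript
const path = require('path');

// Hypothetical layout: baselines and results sit next to the test file,
// in folders named by the `screenshots` and `results` options.
// Uses POSIX separators so the output is the same on every platform.
function artifactDirsFor(testFile, options) {
  const testDir = path.posix.dirname(testFile);
  return {
    baselines: path.posix.join(testDir, options.screenshots),
    results: path.posix.join(testDir, options.results),
  };
}

// With the plugin's default folder names, a full-width test keeps its
// artifacts inside tests/fullwidth/ rather than in top-level folders.
console.log(artifactDirsFor('tests/fullwidth/homepage.js', {
  screenshots: 'screenshots',
  results: 'results',
}));
```

Because the artifacts travel with the test, moving or deleting a test folder takes its baselines and results along with it.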

Grunt

After mocking up our Gruntfile from the grunt-phantomcss documentation, we needed a way to specify which environment to run our visual regression test suite against. That meant passing a parameter to Grunt on the command line, so we could execute a command such as the one below.

```bash
grunt phantom:production
```
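As a rough model of what happens with that command: Grunt splits the task spec on colons, treats the first part as the task name, and passes the remaining parts to the task function as arguments. The sketch below is simplified (it ignores escaped colons and multi-task targets), and `parseTaskSpec` and `phantomTask` are hypothetical names used only for illustration.

```javascript
// Simplified model of splitting "task:arg1:arg2" into a name and arguments.
// Grunt's real parser handles more cases (e.g. escaped colons).
function parseTaskSpec(spec) {
  const [name, ...args] = spec.split(':');
  return { name, args };
}

// A registered task function receives those arguments in order, so
// "phantom:production" ends up calling phantomTask('production').
function phantomTask(env) {
  return `running visual tests against ${env}`;
}

const parsed = parseTaskSpec('phantom:production');
console.log(parsed.name, phantomTask(...parsed.args));
```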

First, we needed to establish the URL associated with each environment. Rather than hard-coding these into the Gruntfile, we created a small Gruntconfig.json file of key-value pairs mapping each environment to its URL. This lets other developers easily change the URLs to match their own environments.

```json
{
  "rootUrls": {
    "local": "http://energy.local/",
    "integration": "http://energy.integration/",
    "stage": "http://energy.stage/",
    "production": "http://energy.gov/"
  }
}
```

Importing the key-value pairs from JSON into our Gruntfile was as easy as a single readJSON function call.

```javascript
var config = grunt.file.readJSON('Gruntconfig.json');
```
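One pitfall with this lookup is that a misspelled environment name (for example `prod` instead of `production`) silently yields `undefined` rather than an error. A minimal guard, assuming the parsed object has the `rootUrls` shape shown above, might look like this; `resolveRootUrl` is a hypothetical helper, not part of Grunt or grunt-phantomcss.

```javascript
// Hypothetical helper: look up an environment's root URL in the parsed
// Gruntconfig.json object, falling back to a default environment and
// failing loudly on names that are not keys of "rootUrls".
function resolveRootUrl(config, env, defaultEnv = 'production') {
  const key = env === undefined ? defaultEnv : env;
  const url = config.rootUrls[key];
  if (url === undefined) {
    const known = Object.keys(config.rootUrls).join(', ');
    throw new Error(`Unknown environment "${key}". Expected one of: ${known}`);
  }
  return url;
}

// Inline copy of the Gruntconfig.json values from the post.
const config = {
  rootUrls: {
    local: 'http://energy.local/',
    integration: 'http://energy.integration/',
    stage: 'http://energy.stage/',
    production: 'http://energy.gov/',
  },
};

console.log(resolveRootUrl(config, 'stage')); // http://energy.stage/
console.log(resolveRootUrl(config));          // http://energy.gov/
```

Throwing early means a typo on the command line stops the run before any screenshots are taken against an `undefined` URL.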

Next, we needed a Grunt task that would accept an environment parameter and pass it through to grunt-phantomcss, so that the baselines and results could be stored in a directory specific to that environment. We achieved this by creating a parent task, “phantom,” that accepts the env parameter, sets the grunt-phantomcss screenshots (baselines) and results options as well as a new rootUrl option, and then calls the “phantomcss” task.

```javascript
grunt.registerTask('phantom', function(env) {
  // Default to production; the name must match a key in rootUrls
  env = (typeof env !== 'undefined' ? env : 'production');

  // Specify environment and directories
  grunt.config.set('phantomcss.options.rootUrl', config.rootUrls[env]);
  grunt.config.set('phantomcss.options.screenshots', env);
  grunt.config.set('phantomcss.options.results', env);
  grunt.task.run('phantomcss');
});
```
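Each `grunt.config.set` call above writes a single nested property identified by a dotted path, leaving sibling options such as `mismatchTolerance` intact. The sketch below is a simplified stand-in for that behavior (Grunt's own implementation handles more cases), and `setByPath` is a hypothetical name.

```javascript
// Simplified stand-in for grunt.config.set: write a value at a dotted path,
// creating intermediate objects when they are missing.
function setByPath(obj, dottedPath, value) {
  const keys = dottedPath.split('.');
  let node = obj;
  for (const key of keys.slice(0, -1)) {
    if (typeof node[key] !== 'object' || node[key] === null) {
      node[key] = {};
    }
    node = node[key];
  }
  node[keys[keys.length - 1]] = value;
  return obj;
}

// Mirrors what the "phantom" task does when called with env = 'stage'.
const gruntConfig = { phantomcss: { options: { mismatchTolerance: 0.05 } } };
const env = 'stage';
setByPath(gruntConfig, 'phantomcss.options.rootUrl', 'http://energy.stage/');
setByPath(gruntConfig, 'phantomcss.options.screenshots', env);
setByPath(gruntConfig, 'phantomcss.options.results', env);
console.log(gruntConfig.phantomcss.options);
```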

The rootUrl option is eventually passed to CasperJS, where each test file prepends it to the relative URL of each page it visits.

Extending Grunt-PhantomCSS

Now that the Gruntfile was set up for multiple environments, we just needed to update the grunt-phantomcss plugin. With Micah’s collaboration we added a rootUrl variable to the PhantomJS test runner that would accept the rootUrl option from our Gruntfile and pass it to each test.

```javascript
// Variable object to set default values for options
var options = this.options({
  rootUrl: false,
  screenshots: 'screenshots',
  results: 'results',
  viewportSize: [1280, 800],
  mismatchTolerance: 0.05,
  waitTimeout: 5000,
  logLevel: 'warning'
});
```

```javascript
casper.start();

// Run the test scenarios
args.test.forEach(function(testSuite) {
  phantom.casperTest = true;
  phantom.rootUrl = args.rootUrl;
  …
});
```
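The `this.options()` call in the plugin merges its defaults with the options configured in the Gruntfile: per the Grunt documentation, task-level options override the defaults, and target-level options override both. The sketch below models only that precedence with a shallow merge; `mergeOptions` is a hypothetical helper, not Grunt's code.

```javascript
// Models the precedence applied by this.options() in a Grunt multi-task:
// defaults < task-level options < target-level options (shallow merge).
function mergeOptions(defaults, taskOptions = {}, targetOptions = {}) {
  return Object.assign({}, defaults, taskOptions, targetOptions);
}

const defaults = {
  rootUrl: false,
  screenshots: 'screenshots',
  results: 'results',
  viewportSize: [1280, 800],
  mismatchTolerance: 0.05,
  waitTimeout: 5000,
  logLevel: 'warning',
};

// An older Gruntfile that never sets rootUrl keeps the default of false.
const legacy = mergeOptions(defaults, { screenshots: 'baselines' });

// The "phantom" task sets rootUrl at task level; a breakpoint target can
// still override viewportSize.
const current = mergeOptions(
  defaults,
  { rootUrl: 'http://energy.gov/', screenshots: 'production', results: 'production' },
  { viewportSize: [375, 667] }
);
console.log(legacy.rootUrl, current.rootUrl, current.viewportSize);
```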

We made sure to maintain backwards compatibility by keeping the rootUrl option optional (it defaults to false), so existing integrations of the grunt-phantomcss plugin would not be adversely affected by our updates. We were almost there: the final step was to update our tests to use the new rootUrl variable. Here we prepend the rootUrl to the now-relative page URL.

```javascript
casper.thenOpen(phantom.rootUrl + <page-url>, function() {
  // Mask dynamic blocks so only the page structure is compared
  this.evaluate(function() {
    jQuery('#page-wrapper .block').css('background', 'gray').css('border', '1px solid black');
    jQuery('.block-bean > *').css('opacity', '0');
    jQuery('.block-bean').css('height', '200px');
  });
})
.then(function() {
  phantomcss.screenshot('#page-wrapper', <test-name>);
});
```
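Plain string concatenation works when every root URL ends with a slash and every page path does not. If you want tests that also tolerate the default `rootUrl` of `false` (leaving absolute URLs from older tests untouched) and stray slashes, a small helper like the hypothetical `pageUrl` below could replace the `+`. The `about` path is an illustrative placeholder.

```javascript
// Hypothetical helper: prepend rootUrl when one was provided; when rootUrl
// is false (the plugin default), return the URL unchanged so older tests
// that use absolute URLs keep working.
function pageUrl(rootUrl, url) {
  if (!rootUrl) {
    return url;
  }
  // Normalize the join point so there is exactly one slash between parts.
  return rootUrl.replace(/\/+$/, '') + '/' + url.replace(/^\/+/, '');
}

console.log(pageUrl('http://energy.gov/', '/about'));
console.log(pageUrl(false, 'http://energy.gov/about'));
```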

With the grunt-phantomcss plugin updated, we were able to run our visual regression tests against multiple environments, depending on our needs. We could run tests after pull requests were merged into development. We could run tests after every deployment to staging and production. We could even run tests locally against our development environments as desired.

Bonus: Tests for Mobile

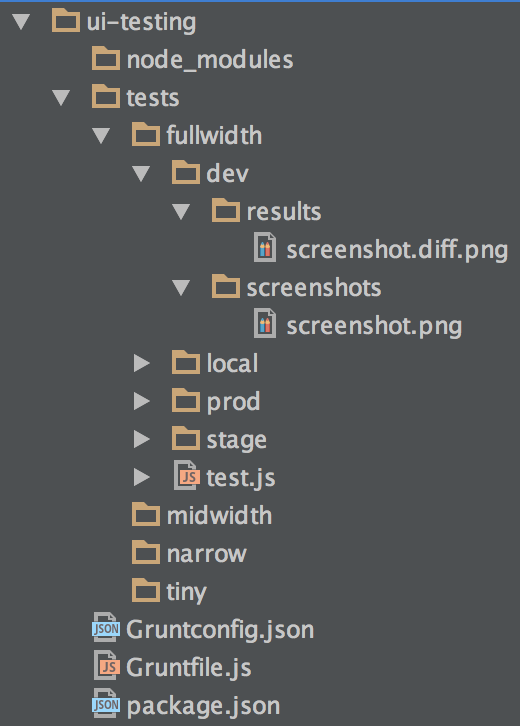

After all our success thus far, we wanted to add the ability to specify the viewport in our Gruntfile. We have tests for each of our four breakpoints: full-size for desktops, mid-size for large tablets, narrow for “phablets”, and tiny for phones. This was an easy lift, requiring just a few more tweaks to our Gruntfile.

```javascript
grunt.initConfig({
  phantomcss: {
    options: {
      mismatchTolerance: 0.05,
      screenshots: 'production',
      results: 'production',
      rootUrl: config.rootUrls.production,
    },
    fullwidth: {
      options: {
        viewportSize: [1280, 800],
      },
      src: ['./tests/fullwidth/*.js']
    },
    midwidth: {
      options: {
        viewportSize: [768, 1024],
      },
      src: ['./tests/midwidth/*.js']
    },
    narrow: {
      options: {
        viewportSize: [600, 960],
      },
      src: ['./tests/narrow/*.js']
    },
    tiny: {
      options: {
        viewportSize: [375, 667],
      },
      src: ['./tests/tiny/*.js']
    }
  }
});
```
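The four sub-tasks in this Gruntfile share one shape and differ only in name and viewport. One possible refactor, sketched here as an assumption rather than what we shipped, generates them from a single table; `breakpoints` and `buildBreakpointTargets` are hypothetical names, while the sizes and test folders are the ones from the Gruntfile.

```javascript
// Breakpoint table: sub-task name -> viewport size [width, height].
const breakpoints = {
  fullwidth: [1280, 800],
  midwidth: [768, 1024],
  narrow: [600, 960],
  tiny: [375, 667],
};

// Build one phantomcss target per breakpoint, pointing at that
// breakpoint's test folder.
function buildBreakpointTargets(table) {
  const targets = {};
  for (const [name, viewportSize] of Object.entries(table)) {
    targets[name] = {
      options: { viewportSize },
      src: [`./tests/${name}/*.js`],
    };
  }
  return targets;
}

// Shared options first, then the generated targets, as would be passed
// to grunt.initConfig({ phantomcss: phantomcssConfig }).
const phantomcssConfig = {
  options: { mismatchTolerance: 0.05 },
  ...buildBreakpointTargets(breakpoints),
};
console.log(Object.keys(phantomcssConfig));
```

Adding a fifth breakpoint then becomes a one-line change to the table plus a new test folder.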

Here we set up four sub-tasks within the “phantomcss” task, one for each breakpoint. Each sub-task specifies the viewport size and the location of the associated test files. Then we updated our parent “phantom” task to take two arguments: an environment parameter and a breakpoint parameter. Both needed sensible behavior when omitted; with no breakpoint, all four sub-tasks run. Additionally, we didn’t want a single failing test to halt the execution of the rest of our tests, so we added the grunt-continue plugin to our package.json. Grunt-continue lets all tests run regardless of errors, and its fail-on-warning step still causes the overall “phantom” task to fail at the end if any single test fails. Here is what our new “phantom” task looks like:

```javascript
grunt.registerTask('phantom', function(env, breakpoint) {
  // Default to production; the name must match a key in rootUrls
  env = (typeof env !== 'undefined' ? env : 'production');

  // Specify environment and directories
  grunt.config.set('phantomcss.options.rootUrl', config.rootUrls[env]);
  grunt.config.set('phantomcss.options.screenshots', env);
  grunt.config.set('phantomcss.options.results', env);

  // Specify breakpoint else test all
  if (typeof breakpoint !== 'undefined') {
    grunt.task.run('phantomcss:' + breakpoint);
  } else {
    // Using grunt-continue to run all sub-tasks regardless of errors
    // We want the grunt job to fail in the end if any tests failed.
    // Since grunt-continue turns errors into warnings we will fail if any warnings are thrown.
    grunt.task.run('continue:on');
    grunt.task.run('phantomcss');
    grunt.task.run('continue:off');
    grunt.task.run('continue:fail-on-warning');
  }
});
```
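The branching in this task is easier to check when pulled out into a pure function that returns the environment and the list of tasks to queue. The sketch below mirrors the task's logic, assuming the default environment is `production`; `planPhantomRun` is a hypothetical name and does not call Grunt.

```javascript
// Pure model of the "phantom" task: pick the environment and decide which
// tasks get queued, without touching Grunt itself.
function planPhantomRun(env, breakpoint) {
  const environment = env === undefined ? 'production' : env;
  if (breakpoint !== undefined) {
    // A single breakpoint runs just that phantomcss sub-task.
    return { environment, queue: ['phantomcss:' + breakpoint] };
  }
  // All breakpoints: keep going past failures, then fail on any warning.
  return {
    environment,
    queue: ['continue:on', 'phantomcss', 'continue:off', 'continue:fail-on-warning'],
  };
}

console.log(planPhantomRun('stage', 'tiny'));
console.log(planPhantomRun());
```

Read against the command line, `grunt phantom:stage:tiny` would run only the phone-sized tests against staging, while a bare `grunt phantom` would run every breakpoint against production.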

It was a success! Through the power and versatility of Grunt and the various open source plugins tailored for it, we were able to achieve significant automation of our visual regression tests. We were happy with our new ability to test across a range of environments, combating regressions and ensuring our environments are kept in a stable state. But we hadn’t reached our full potential yet. The workflow wasn’t fully automatic; we still had to manually kick off these visual regression tests periodically, and that’s no fun. The final piece of the puzzle would be the Jenkins continuous integration tool, which I will be discussing in the final part of this Department of Energy Visual Regression Testing series.