Selenium IDE: Automated Testing Made Easy

Thomas Neff | Associate Product Manager

August 19, 2013

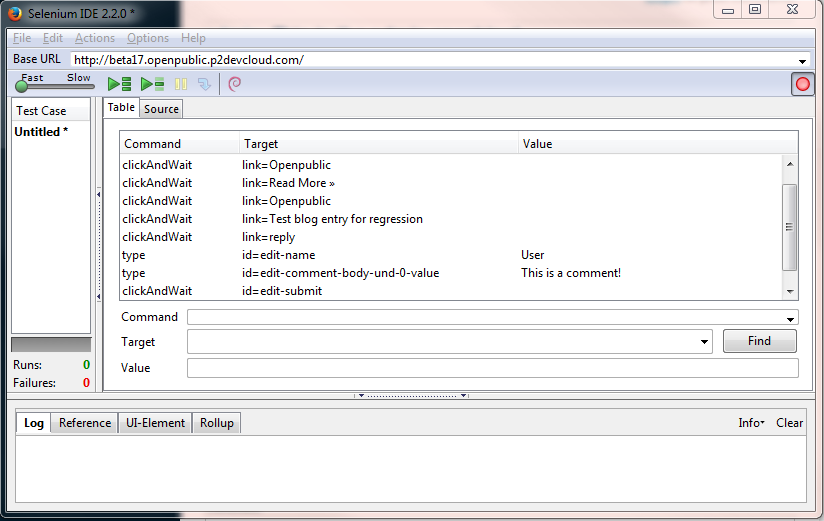

Have you heard a lot about automated testing but are unsure how to use it in your QA process? Want to use automated tests but lack the programming skills to write your own? Then the folks at the Selenium project have created just the tool for you! If you have experimented with browser automation and automated testing, Selenium will undoubtedly be familiar. If you haven’t encountered it before, Selenium is a software testing framework for the web that automates browsers. The Selenium project produces two tools: the Selenium IDE and Selenium WebDriver, a more powerful and open-ended tool that lets the user script a huge variety of testing interactions (for more info see here). The Selenium IDE is a simple but powerful Firefox extension that lets users record and replay sets of browser interactions as test cases.

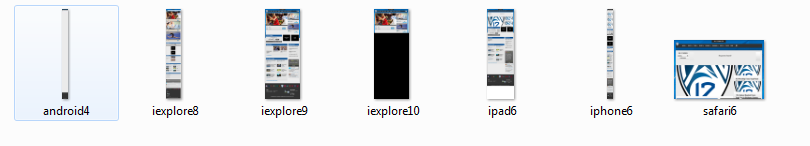

For my first experiment with the IDE, I created a test case that generates a piece of test content on a Drupal 7 based site. This success led me to consider building an automated test suite which, when run, would stand up a full set of test content for any new environment on a site. It is also possible to record a given test interaction and save that test case for on-demand regression testing of the same feature.

For those who have experience with Selenium WebDriver, the IDE can export test cases in a variety of languages for use through WebDriver. By using the IDE and WebDriver together with a third-party browser emulation service (Sauce Labs supports the Selenium project and provides in-depth tutorials on writing Selenium scripts and running them through their service), it's possible to almost fully automate cross-browser testing.

On our project for the Georgia Technology Authority, I used a script to capture screenshots of 48 pages in 8 different browsers and on 3 different mobile devices (see the image below for an example of the output from the script). I then only had to compare the screenshots against our established baseline (in this case Chrome) to verify that the site was behaving well in all supported browsers. Including the hour it took to run the script, this turned a task that initially took two days by hand into one that could be completed in half a day.
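To give a feel for what such a screenshot script involves, here is a minimal Python sketch. It is not the script used on the project. The names `ScreenshotJob`, `plan_jobs`, `run_jobs`, and `make_driver` are hypothetical, and the sketch assumes the caller supplies a function that returns a Selenium WebDriver (local or remote) for each browser label. The only WebDriver calls used are `get`, `save_screenshot`, and `quit`.

```python
from dataclasses import dataclass
from itertools import product
from pathlib import Path


@dataclass(frozen=True)
class ScreenshotJob:
    browser: str   # label for the browser or device to drive
    path: str      # site-relative page path, e.g. "/about"
    outfile: Path  # where the PNG should be written


def plan_jobs(browsers, paths, outdir="shots"):
    """Build one job per (browser, page) pair."""
    jobs = []
    for browser, path in product(browsers, paths):
        # Turn "/about/team" into "about_team"; the front page becomes "home".
        slug = path.strip("/").replace("/", "_") or "home"
        outfile = Path(outdir) / browser / f"{slug}.png"
        jobs.append(ScreenshotJob(browser, path, outfile))
    return jobs


def run_jobs(jobs, base_url, make_driver):
    """Visit each page and save a screenshot.

    make_driver is a caller-supplied (hypothetical) factory that takes a
    browser label and returns a Selenium WebDriver instance.
    """
    drivers = {}
    try:
        for job in jobs:
            # Reuse one driver per browser instead of starting one per page.
            if job.browser not in drivers:
                drivers[job.browser] = make_driver(job.browser)
            driver = drivers[job.browser]
            job.outfile.parent.mkdir(parents=True, exist_ok=True)
            driver.get(base_url.rstrip("/") + job.path)
            driver.save_screenshot(str(job.outfile))
    finally:
        # Always close the browsers, even if a page fails to load.
        for driver in drivers.values():
            driver.quit()
```

With 48 pages and 11 targets (8 browsers plus 3 devices), `plan_jobs` yields 528 jobs, one screenshot each. For a local trial, `make_driver` could simply return `selenium.webdriver.Firefox()` regardless of the label; pointing it at a hosted service would need that service's own connection settings.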

I should note that the system is not perfect. While experimenting with the IDE, I occasionally had to manually add steps that the record function had missed. The tool makes this easy: you can edit individual interactions and add new ones using an easy-to-understand set of commands and (usually) easy-to-find element IDs. For those interested in WebDriver, I should warn you that it has a relatively steep learning curve and requires a reasonable level of programming knowledge to get started. To learn more about testing in Drupal, check out Erik Summerfield's blog post, "Test Driven Drupal".
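For readers curious what those hand-added steps look like, each IDE step is a command, a target, and an optional value. The Python sketch below models a few steps as tuples and renders them as the HTML table rows that IDE versions of this era saved to disk. The page path, element IDs, and text values are assumptions based on a stock Drupal 7 article form, and `to_selenese_rows` is a hypothetical helper, not part of Selenium.

```python
from html import escape

# Each step is (command, target, value), matching the three columns the IDE shows.
# Path and element IDs are assumptions for a default Drupal 7 article form.
STEPS = [
    ("open", "/node/add/article", ""),
    ("type", "id=edit-title", "Test article"),
    ("type", "id=edit-body-und-0-value", "Body text for the test article."),
    ("clickAndWait", "id=edit-submit", ""),
    ("verifyTextPresent", "has been created", ""),
]


def to_selenese_rows(steps):
    """Render steps as one HTML table row per step."""
    rows = []
    for command, target, value in steps:
        cells = "".join(f"<td>{escape(cell)}</td>" for cell in (command, target, value))
        rows.append(f"<tr>{cells}</tr>")
    return "\n".join(rows)


if __name__ == "__main__":
    print(to_selenese_rows(STEPS))
```

A step the recorder missed, such as the final `verifyTextPresent` check, is just one more row with the same three fields, which is why adding it by hand in the IDE is quick.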